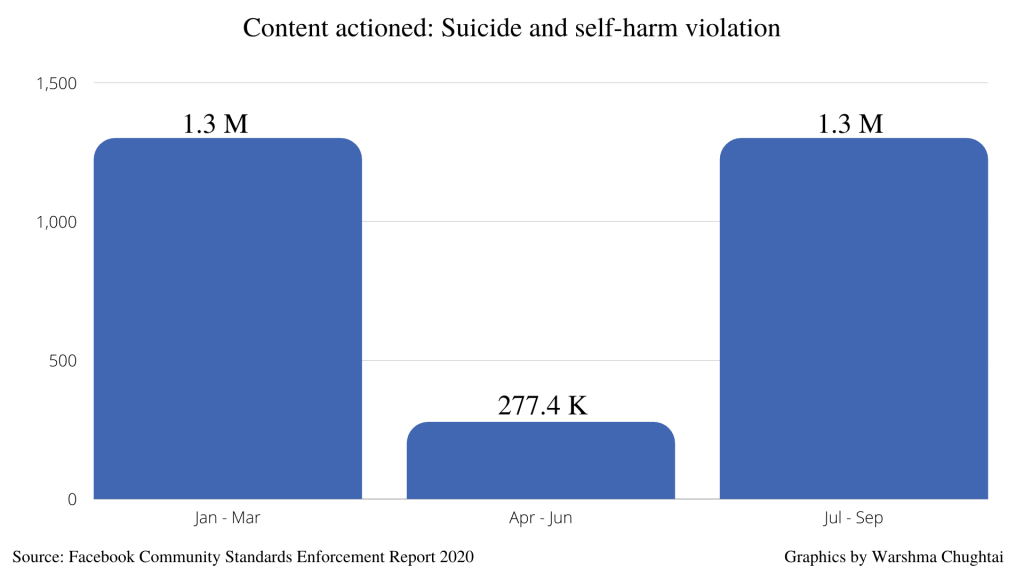

Instagram removed significantly fewer posts that violated its suicide and self-injury rules from April to June than in other quarters, according to reports by parent company Facebook.

The community standards enforcement report indicates that 1.3 million pieces of content were removed in January to March and in July to September, whereas 80% less content was removed from April to June.

With the country facing the peak of the pandemic during these months, mental health problems also rose sharply.

Zakia, 20, said: “With the pandemic going on mental health discussions are becoming increasingly necessary. I feel Instagram should have implemented a swift strategy in removing these posts instead of designating specific months to withdraw potentially disturbing content. For me, it almost seems like an excuse for not sorting it out at the height of the pandemic.”

Most of the company's workers were sent home during the lockdown. Facebook said it had prioritised the removal of the most harmful content. However, other violation categories did not see a drastic difference.

Even the suicide and self-harm category on Facebook did not see a radical difference, with only 30% less action taken.

Avid Instagram user, Saad Habib, 24, said: “I don’t understand on what basis they are prioritising. To me, adult content and cyber-bullying are as bad as suicidal and self-harm content.”

Since most of the workers have been called back to the office, the number of removals has gone back to pre-Covid levels.

Reacting to appeals for better content moderation, Instagram has updated its Terms of Use to make them clearer and said that the new terms will take effect from December. It has also launched a software tool to recognise and remove self-harm and suicide content on its app in the UK and Europe.

To read more on mental health issues during the pandemic:

Beating winter blues – the effects of SAD on mental health

Student mental health ignored by universities

Lockdown Diaries #3: self-validation on social media

Words & Picture: Warshma Chughtai | Subbing: Deborah Melchiorre